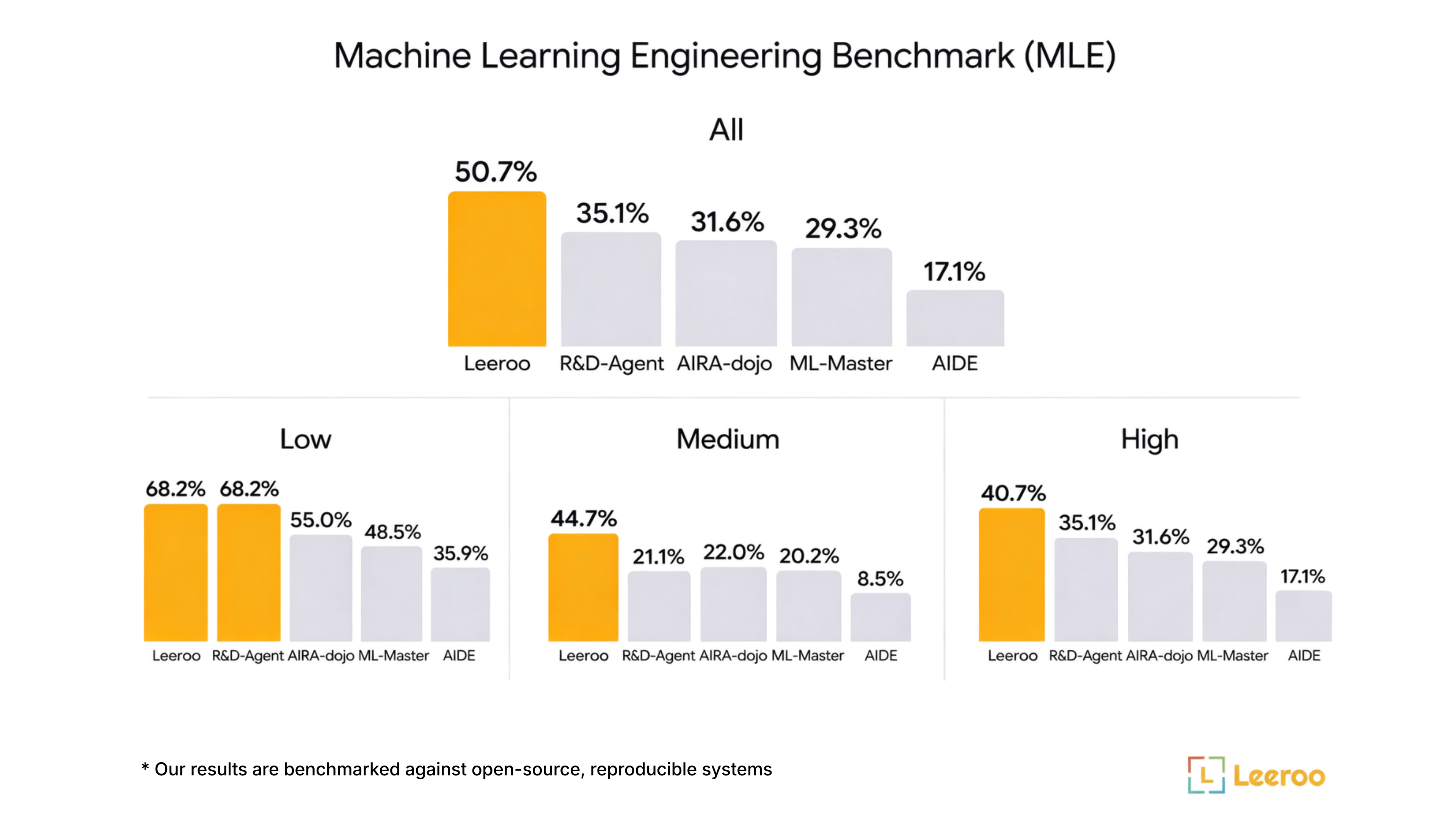

MLE-Bench is a benchmark for evaluating ML engineering agents on Kaggle competitions. Kapso achieved #1 among open-source systems on this benchmark.

Usage

    # List available competitions
    PYTHONPATH=. python -m benchmarks.mle.runner --list

    # List lite benchmark competitions
    PYTHONPATH=. python -m benchmarks.mle.runner --lite

    # Solve a competition
    PYTHONPATH=. python -m benchmarks.mle.runner -c tabular-playground-series-dec-2021

    # With options
    PYTHONPATH=. python -m benchmarks.mle.runner \
      -c tabular-playground-series-dec-2021 \
      -i 20 \
      -m MLE_CONFIGS \
      -d aider

CLI Options

| Option | Description | Default |
|---|---|---|
| `-c`, `--competition` | Competition ID | Required |
| `-i`, `--iterations` | Max experiment iterations | 20 |
| `-m`, `--mode` | Config mode | `MLE_CONFIGS` |
| `-d`, `--coding-agent` | Coding agent | From config |
| `--no-kg` | Disable knowledge graph | Enabled |
| `--list` | List all competitions | - |
| `--lite` | List lite competitions | - |
| `--list-agents` | List coding agents | - |
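
When running several competitions from a script, it can help to assemble the command line programmatically. The sketch below is one way to do that using only the flags listed in the options table; `build_command` and `run_competition` are hypothetical helper names, not part of the runner, and the code assumes it is launched from the repository root.

```python
import os
import subprocess
import sys


def build_command(competition, iterations=20, mode="MLE_CONFIGS",
                  coding_agent=None, use_kg=True):
    """Assemble the argv list for one runner invocation (hypothetical helper)."""
    cmd = [sys.executable, "-m", "benchmarks.mle.runner",
           "-c", competition, "-i", str(iterations), "-m", mode]
    if coding_agent is not None:
        cmd += ["-d", coding_agent]  # otherwise the agent comes from the config
    if not use_kg:
        cmd.append("--no-kg")  # knowledge graph stays enabled unless this flag is passed
    return cmd


def run_competition(competition, **options):
    """Run the benchmark from the repository root, mirroring `PYTHONPATH=.`."""
    env = {**os.environ, "PYTHONPATH": "."}
    return subprocess.run(build_command(competition, **options), env=env, check=True)
```

Calling `run_competition("tabular-playground-series-dec-2021", coding_agent="aider")` would then correspond to the "With options" example under Usage.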

Configuration Modes

MLE-Bench runs use the `benchmark_tree_search` strategy, which scores each candidate with the handler's built-in evaluation via `handler.run()`. This differs from `kapso.evolve()`, which relies on an evaluation built by the agent.

Production configuration with full features.

    search_strategy:
      type: "benchmark_tree_search"
      params:
        reasoning_effort: "high"
        code_debug_tries: 15
        node_expansion_limit: 2
    coding_agent:
      type: "aider"
      model: "o3"
    knowledge_search:
      enabled: true

Fast configuration for testing.

    search_strategy:
      type: "benchmark_tree_search"
      params:
        reasoning_effort: "medium"
        code_debug_tries: 2
    coding_agent:
      type: "aider"
      model: "gpt-4.1-mini"
    knowledge_search:
      enabled: false

Stages

The handler automatically adjusts strategy based on budget progress:

| Stage | Budget | Behavior |
|---|---|---|
| MINI TRAINING | 0-35% | Sample training data (for datasets >30GB) |
| FULL TRAINING | 35-80% | Train on complete dataset |
| FINAL ENSEMBLING | 80-100% | Ensemble best models from history |
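
The thresholds in the table can be read as a simple mapping from budget progress to stage. The following is a minimal sketch of that mapping, not the handler's actual code: `stage_for_progress` is a hypothetical name, and assigning the exact boundary values (35% and 80%) to the later stage is an assumption, since the table does not say which side they fall on.

```python
def stage_for_progress(progress: float) -> str:
    """Map budget progress (0.0 to 1.0) to a stage name from the table above."""
    if not 0.0 <= progress <= 1.0:
        raise ValueError("progress must be between 0.0 and 1.0")
    if progress < 0.35:
        return "MINI TRAINING"
    if progress < 0.80:  # assumption: exactly 35% already counts as FULL TRAINING
        return "FULL TRAINING"
    return "FINAL ENSEMBLING"  # assumption: exactly 80% already counts as ensembling
```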

Output Structure

The agent generates:

    experiment_workspace/{uuid}/
    ├── main.py                      # Entry point
    ├── output_data_{branch}/
    │   ├── final_submission.csv     # Kaggle submission file
    │   └── checkpoints/             # Model checkpoints
    └── sessions/                    # Experiment branches
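
Given this layout, submission files from all runs and branches can be collected with a glob. This is a small sketch under the assumption that the directory names follow the tree above literally; `find_submissions` is a hypothetical helper, not something the project provides.

```python
from pathlib import Path


def find_submissions(workspace_root):
    """Return every final_submission.csv under {uuid}/output_data_{branch}/."""
    root = Path(workspace_root)
    # First wildcard matches the run UUID, second the branch suffix.
    return sorted(root.glob("*/output_data_*/final_submission.csv"))
```

For example, `find_submissions("experiment_workspace")` would return one path per branch that produced a submission.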

Code Requirements

Generated code must:

- Support `--debug` flag for fast testing
- Write `final_submission.csv` in the output directory
- Print progress and metrics
- Handle GPU efficiently (batch size, device selection)
- Use early stopping and learning rate scheduling
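
To make these requirements concrete, here is a rough skeleton of what a conforming entry point might look like. It is an illustrative sketch only: the `--output-dir` flag, the CSV columns, and the placeholder loss values are all assumptions, and GPU handling and learning rate scheduling are left out because they depend on the framework in use.

```python
import argparse
import csv
from pathlib import Path


def parse_args(argv=None):
    parser = argparse.ArgumentParser(description="Hypothetical training entry point")
    parser.add_argument("--debug", action="store_true",
                        help="Run a shortened loop for fast testing")
    # --output-dir is an assumed flag; the source only mentions "the output directory".
    parser.add_argument("--output-dir", default="output_data")
    return parser.parse_args(argv)


def train(num_epochs, patience=2):
    """Placeholder loop: prints progress and stops early when the metric stalls."""
    # Stand-in validation losses; a real script would compute these from a model.
    fake_losses = [0.9, 0.7, 0.65, 0.66, 0.67, 0.68]
    best, stale = float("inf"), 0
    for epoch in range(num_epochs):
        loss = fake_losses[min(epoch, len(fake_losses) - 1)]
        print(f"epoch={epoch} val_loss={loss:.4f}")
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= patience:
                print(f"early stopping at epoch {epoch}")
                break
    return best


def main(argv=None):
    args = parse_args(argv)
    num_epochs = 2 if args.debug else 20
    best = train(num_epochs)
    out_dir = Path(args.output_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    submission = out_dir / "final_submission.csv"
    with submission.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["id", "prediction"])  # assumed columns; competitions differ
        writer.writerow([0, 0.5])              # dummy row standing in for real predictions
    print(f"best_val_loss={best:.4f} wrote={submission}")
    return submission


if __name__ == "__main__":
    main()
```

Running the script with `--debug` shortens the loop to two epochs, which is the kind of quick check the first requirement is meant to enable.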

Competition Types

| Type | Examples |
|---|---|
| Tabular | `tabular-playground-series-*` |
| Image | `dogs-vs-cats-*`, `plant-pathology-*` |
| Text | `spooky-author-identification`, `jigsaw-toxic-*` |
| Audio | `mlsp-2013-birds` |
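
The example IDs in the table are glob-style patterns, so a competition ID can be matched against them directly. The sketch below shows one way to do that with the standard library; `TYPE_PATTERNS` and `competition_type` are hypothetical names, and the table lists examples only, so an ID that matches nothing here may still belong to one of these types.

```python
from fnmatch import fnmatchcase

# Patterns copied from the table above; the list is illustrative, not exhaustive.
TYPE_PATTERNS = {
    "Tabular": ["tabular-playground-series-*"],
    "Image": ["dogs-vs-cats-*", "plant-pathology-*"],
    "Text": ["spooky-author-identification", "jigsaw-toxic-*"],
    "Audio": ["mlsp-2013-birds"],
}


def competition_type(competition_id):
    """Return the first type whose pattern matches the ID, or None if none match."""
    for comp_type, patterns in TYPE_PATTERNS.items():
        if any(fnmatchcase(competition_id, pattern) for pattern in patterns):
            return comp_type
    return None
```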

Environment Variables

| Variable | Default | Description |
|---|---|---|
| `CUDA_DEVICE` | 0 | GPU device ID |
| `MLE_SEED` | 1 | Random seed |
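
A script that wants to honor these variables could read them with the same defaults. This is a sketch of that pattern, not the runner's own code: `read_env` is a hypothetical helper, and how the benchmark itself consumes the variables is not specified here.

```python
import os


def read_env(environ=None):
    """Return (cuda_device, seed), falling back to the defaults in the table above."""
    environ = os.environ if environ is None else environ
    cuda_device = int(environ.get("CUDA_DEVICE", "0"))
    seed = int(environ.get("MLE_SEED", "1"))
    return cuda_device, seed
```

With neither variable set, `read_env()` yields device 0 and seed 1; the seed would still need to be passed to each library that keeps its own random state.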